-

Notifications

You must be signed in to change notification settings - Fork 47

Processing Big Data with Titanoboa

Thanks to its distributed nature, titanoboa is well predisposed for Big Data processing.

You can also fine-tune how performant and robust your Big Data processing will be - based on your job channel configuration - if you are using a job channel that is robust and highly-available so will be your big data processing.

If on the other hand you are using a job channel that does not persist messages you will probably have more performant set up (but less robust). ...And of course you can combine these two approaches (it is perfectly possible to use multiple job channels and core systems in one titanoboa server.

Ultimately, if you use SQS queue as a job channel, your processing can have unlimited scalability while being most robust - your titanoboa servers can be located across multiple regions and availability zones!

But lets not get ahead of ourselves and start from the beginning:

There are two workflow step supertypes that are designed exactly for purpose of processing large(r) datasets:

-

:map - based on a sequence returned by this step's workload function, many separate atomic jobs are created

-

:reduce - performs reduce function over results returned by jobs triggered by a map step

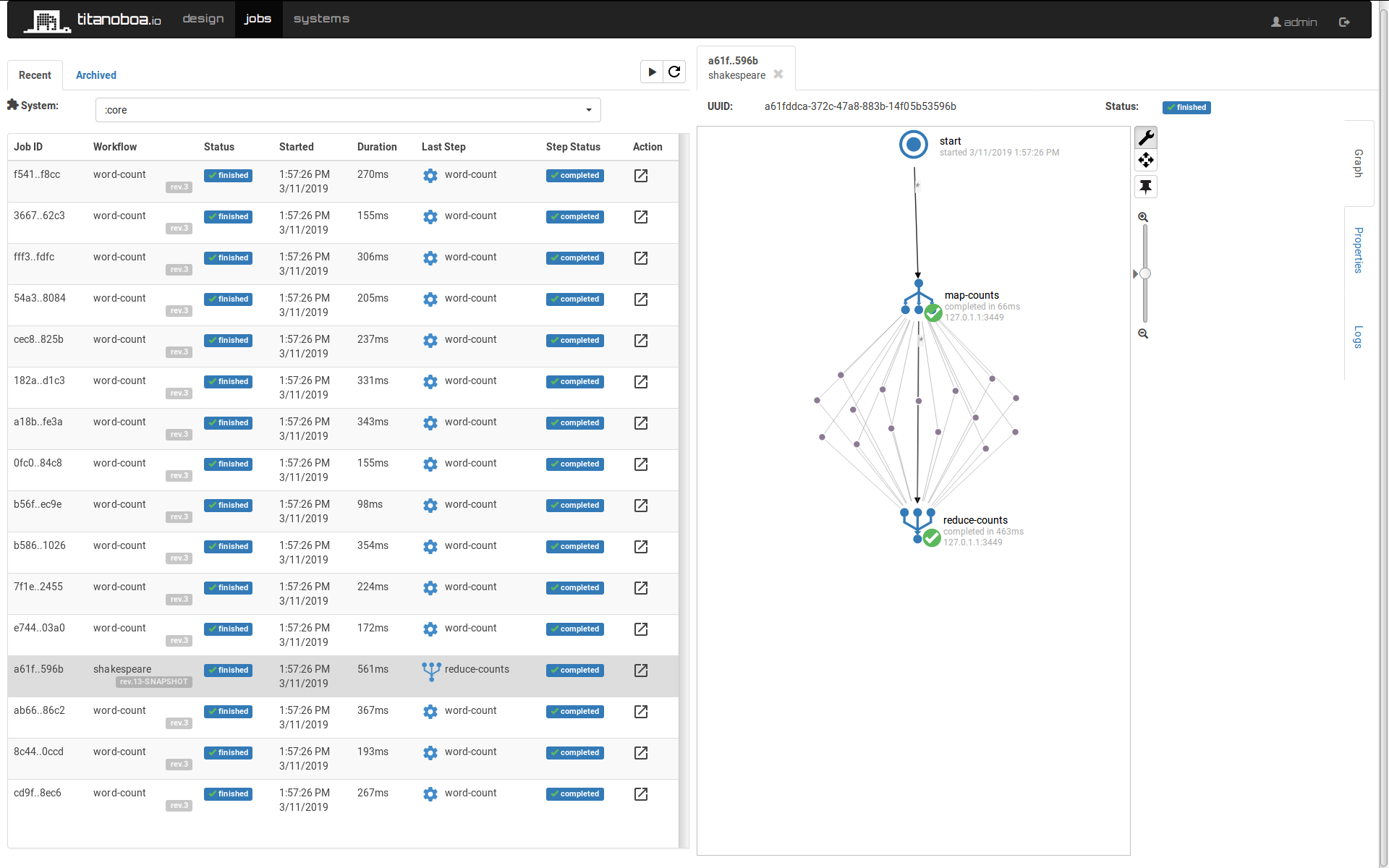

Here we can create a simple workflow that will count each word's occurrence in works of William Shakespeare.

First map step will simply return a list of files to process - and will trigger a separate workflow "count-words" for each of them. If we wanted to be more elaborate, we could further break down each play into smaller pieces, but for now this will do.

Then there is the "word-count" workflow: it is a simple workflow with a single step, it will parse a file passed onto it and count word's frequencies in it and return it as a map.

The last step is a reduce step: It takes all the results for "word-count" workflow and performs the reduce function over it - in this case it simply uses addition to sum all the counts together.

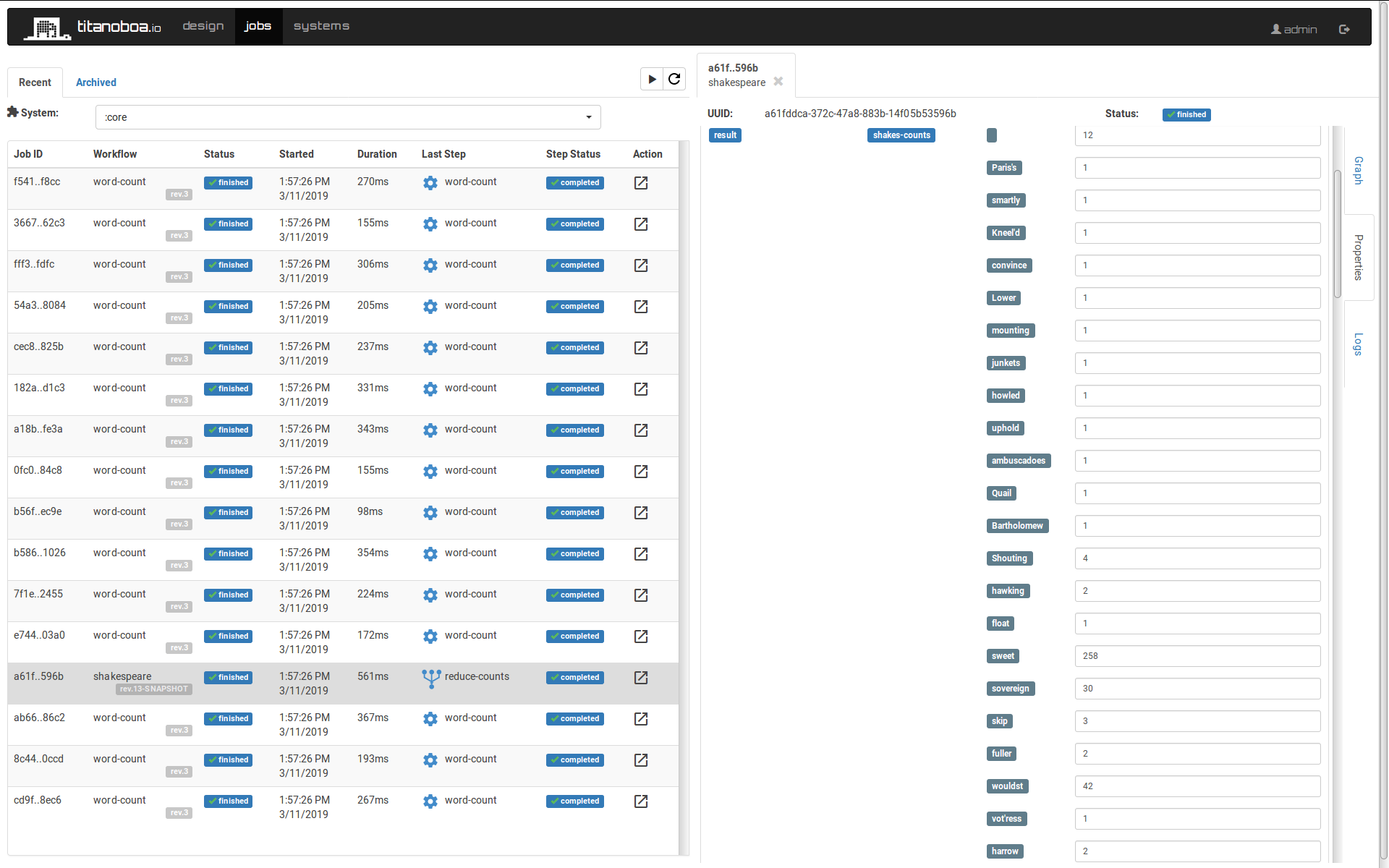

As a result we get an (unordered) map of words' frequencies as a part of the job's properties: